_________________________________________________________________________________________

Table Extensions are a new feature that has been released with Tableau version 2022.3. They allow us to create new data tables from analytic extension scripts. In this blog, we will be exploring deeper what Table Extensions are and what benefits they bring. As well as the syntax that needs to be followed with some examples along the way. Without further ado, let’s get into Table Extensions. As for the examples, we will be focusing on the Python scripting language with the Tabpy analytics extension.

_________________________________________________________________________________________

Background

As briefly mentioned before, table extensions allow us to now generate entire tables directly from analytic extension scripts. Analytic extensions in Tableau have been around for a while. Which allows us to use external services like Python, R, Einstein Discovery, and other extension APIs with Tableau. Therefore opening up Tableau to perform other advanced analytics within its environment. Such as deploying predictive models.

Analytics Extensions

Until now, the analytical extensions would only be able to be saved as calculated fields by calling a particular script function. Below is an example of multiplying List Price and Quantity together within a script function calculation:

SCRIPT_REAL("_arg1 * _arg2", [List Price], [Quantity])However, we can only return a field or a value. Returning multiple columns from one script or generating tables was not possible until the introduction of Table Extensions. In order to connect Tableau and the analytics extensions APIs such as “Tabpy” for Python or “Rserve” for R, we will need to establish a connection between them. For more detail about how to set up this connection as well as supporting information about analytics extensions, take a look at Passing Expressions with Analytics Extensions. Also, some further examples on Getting Started with the Analytics Extensions API.

Table Extensions and Benefits

Instead of writing calculated fields integrating scripts, we can now push the scripts back to the data source and generate full tables. Integrating scripts this way results in faster data processing. Since each calculation made does not have to be computed separately. With Table Extensions, we also will not need to incorporate additional infrastructure to run codes and integrate them with Tableau. We can do it in the same environment.

On the data source, we can see a new draggable logical table object on the side panel names Table Extensions. We can drag this new logical table into our data model with a script that generates tables.

Usage

Of course, in order to be able to benefit from Table Extensions, there have to be some prerequisites beforehand. One of them is having knowledge of coding languages. This feature is not targeted at all Tableau users. But mainly to users who might want to integrate the ad-hoc nature of data analysis with Tableau with external scripts. The other prerequisite is the actual connection between the analytics API and Tableau. Follow this link to set up the connection if it is not configured in your environment yet.

If the prerequisites are met we can use Table Extensions now. As shown in the short video above, we drag the object into the data model first. For the following examples, I will be using the analytic extension of Tabpy.

The Interface

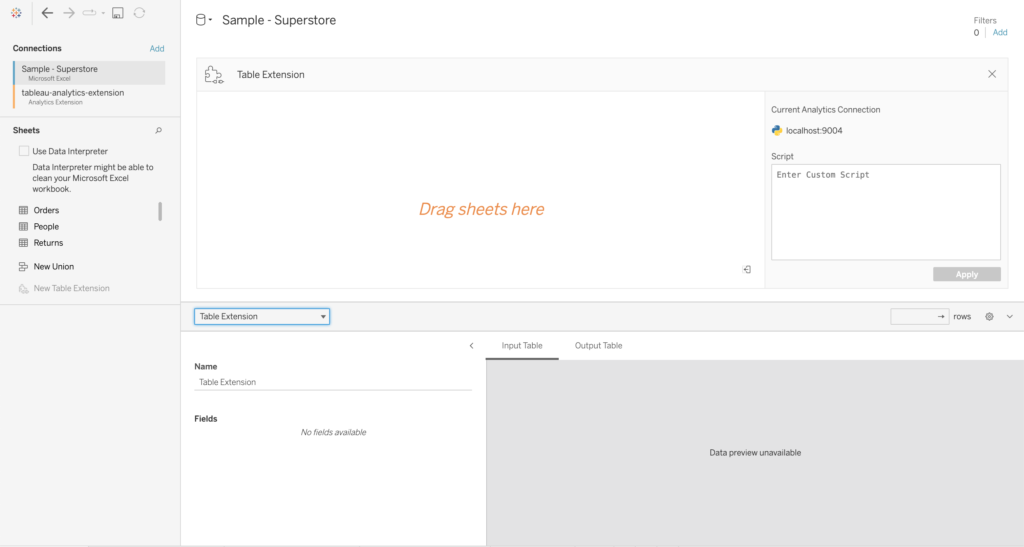

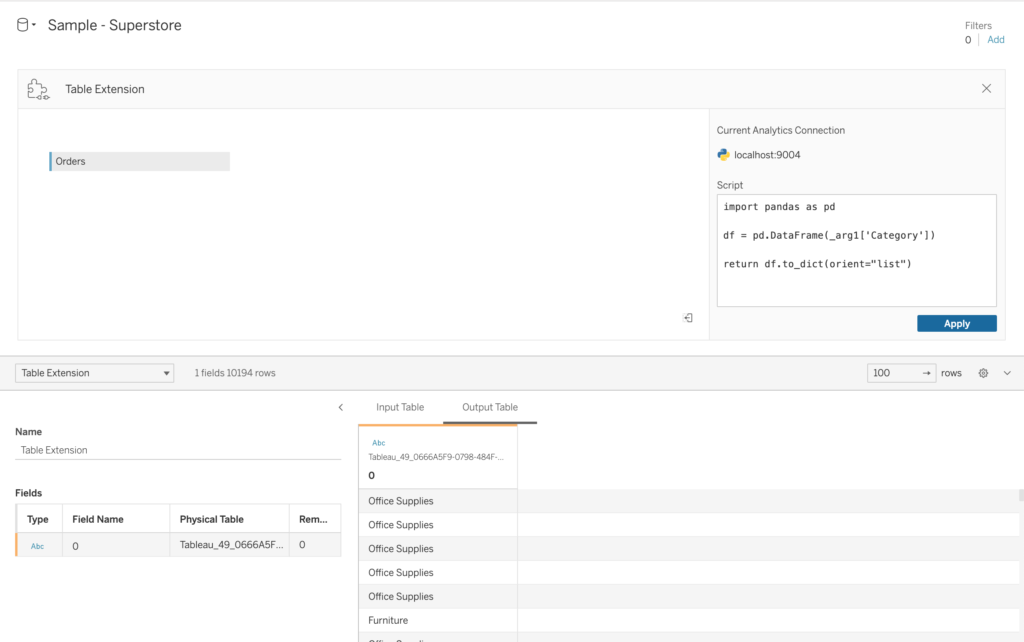

In the logical table, we are met with a view as shown below.

Within the table on the left side, we see the current connection type and the script text box below it. To the right, we see the area where we can include sheets from different sources to base the potential models that we will write in our script on. We can also perform joins in this area as well.

A very important element to note is that whichever table(s) are put in the area that says “Drag Tables Here”, is actually referred to as “_arg1“. This can be familiar if you have used analytic extensions as table calculations where the fields used in the script are referred to at args. Similar logic here as well. If we would like to call the whole table as it is, our script will be as follows (for Tabpy):

return _arg1The bottom area will show us the “Input Table” and the “Output Table”. The input will display a sample of the table we have put in the area. Meanwhile, the output will show us what the generated table looks like after we have passed the script.

The Difficulty in the Script Logic

The actual challenge for me while learning about the Table Extensions was from figuring out how Tableau interprets the data when the Table Extensions are configured. Also on how to adjust the script in order for Tableau to be able to interpret the script and return the correct schema. Current documentation about this is also limited at the moment, which made it harder to understand.

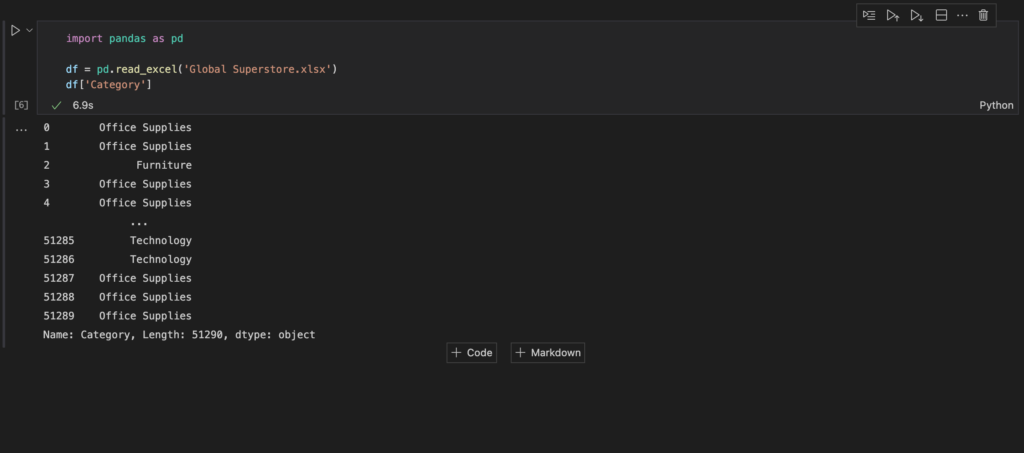

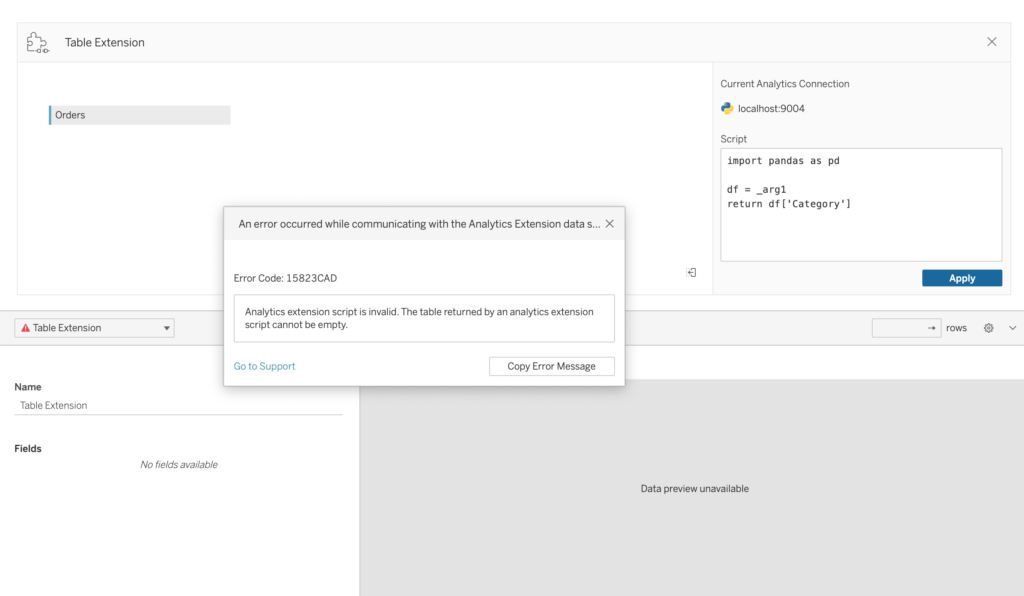

For example, if I want to return only one column from the whole dataset by writing a script like below, which normally works in other development environments, does not work in Tableau right away.

After some trial and error with some further research, I have figured out that the data sent to Tableau in this form is not being sent as tables or DataFrame. It is being sent as a dictionary. Therefore instead of being in a column and rows structure. It is in a key and value structure. Check out this source to know more about Dictionaries in Python.

Workaround

Since it is done this way, we will need to manipulate our code a little bit. What I found out that was the best way to do it for me is to actually first convert the _arg1 into a DataFrame. Do whatever I need to do with scripting and in the end, convert it back into a dictionary again. So taking the example from before, I will need to manipulate my code as such. Then I can return the “Category” column only.

If you decide to convert _arg1 to a DataFame first, then you will be able to do the same functionalities that you would do with DataFrames in Python in Tableau as well. But in the end, the return will need to be converted back.

df = pd.DataFrame(_arg1) #to convert the full table into a DataFramereturn df.to_dict(orient="list") #convert it back to a dictionaryThe rest is up to us on what we want to do with scripting. Let’s take a look at an example below.

Example

In order to demonstrate the Table extensions example, I will be using another example from Alex Fridriksson called: Sentiment Analysis Using Python in Tableau with Tabpy. In this example, Alex uses Analytic Extensions and saves the scripts as calculated fields. I will be demonstrating a transformation on how this example could be achieved using Table Extensions.

The example follows an implementation of a sentiment analysis script to determine the sentiment of the Billboard top 100 songs from 1964 to 2015. Check out the article for a more detailed overview of the example. The script in this example without table extensions is as so:

SCRIPT_REAL("

import nltk

from nltk.sentiment import SentimentIntensityAnalyzer

text = _arg1

scores = []

sid = SentimentIntensityAnalyzer()

for word in text:

ss = sid.polarity_scores(word)

scores.append(ss['compound'])

return scores

"

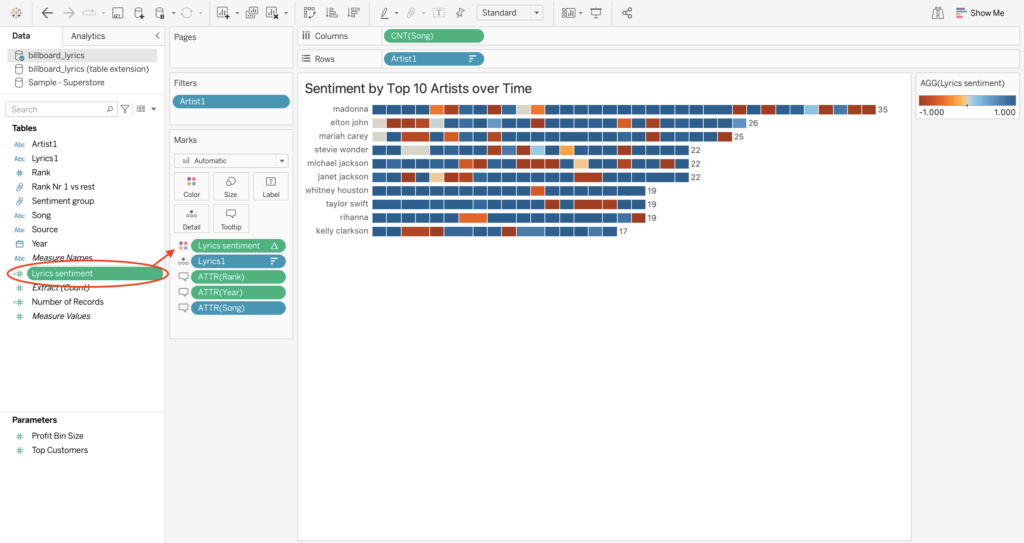

,ATTR([Lyrics1]))This sentiment calculation is later used as a color gradient in the following view where a positive or negative sentiment color is assigned to the top artists per song:

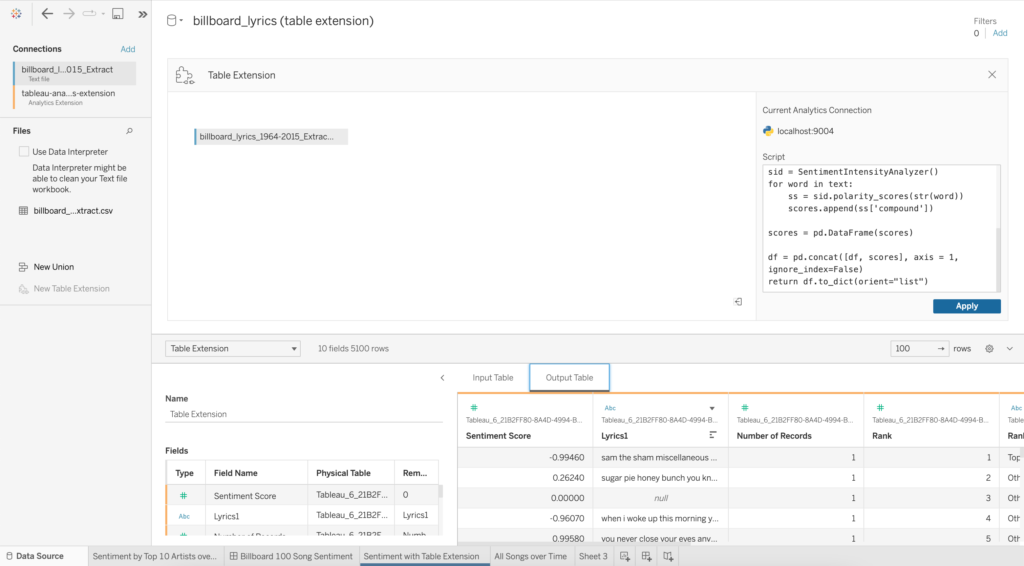

The goal is to already include this sentiment analysis already in the source table. With the use of Table Extensions, we can do this. First of all, I put the Table extensions table in the data model and put in the dataset the example used in it. After some adjustments to the script already written, I managed to add the sentiment score per row to the existing table:

The code I used in the Table Extension script is as follows:

import pandas as pd

import nltk

import json

from nltk.sentiment import SentimentIntensityAnalyzer

df = pd.DataFrame(_arg1)

text = df["Lyrics1"]

scores = []

sid = SentimentIntensityAnalyzer()

for word in text:

ss = sid.polarity_scores(str(word))

scores.append(ss['compound'])

scores = pd.DataFrame(scores)

df = pd.concat([df, scores], axis = 1, ignore_index=False)

return df.to_dict(orient="list")I am again first converting _arg1 into a DataFrame first. After that I apply the computation of the sentiment score into another DataFrame and concatenate them in the end, finalizing it by again converting it back to the dictionary.

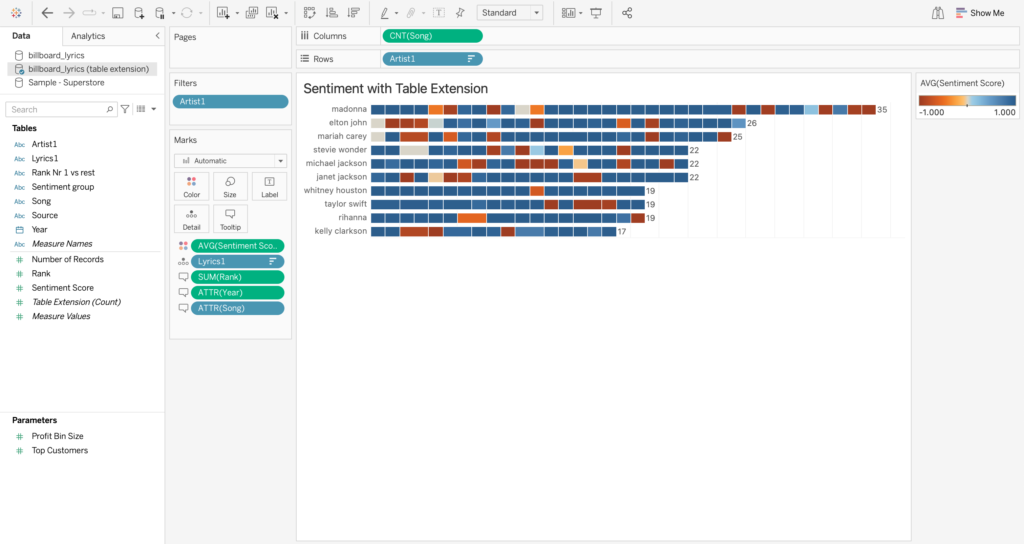

I can now rebuild the view with the sentiment score already computed. Which gives me the same results, but faster.

Final Remarks

Thank you for reading this blog. Also check out our other blogs page to view more blogs on Tableau, Alteryx, and Snowflake here.

Work together with one of our consultants and maximize the effects of your data.

Contact us, and we’ll help you right away.